While Google and other search engines are getting better at finding pages on their own, sitemaps still help by giving them more data about your web pages. There are many XML sitemap generators available, some for purchase and some free of charge. They do what they should: crawl your site and spit out a properly formatted static XML sitemap.

The only problem with these XML sitemap generators is that they don’t know which URLs should (or shouldn’t) be in the XML sitemap. Sure, you can tell some of them to obey directives and tags, like robots.txt and canonical tags, but unless your site is perfectly optimized, you’ll still need to do some manual work.

It is extremely rare to see a large site so perfectly optimized that these tools produce a flawless XML sitemap. Parameters tend to create duplicate or bloated pages. Language directories are sometimes included inappropriately. Out-of-control folder structures tend to reveal useless process files and pages you didn’t know existed. The larger and more dynamic the site, the greater the likelihood of unnecessary page URLs.

At the end of the day, your XML sitemap should only expose the URLs that you really want Google to see. Nothing more and nothing less. The XML sitemap file is intended to give search engines a data dump of all your important pages, to supplement what they did not find on their own. In return, this allows those “not found” pages to be found, crawled, and hopefully ranked in search results.

So what should be in the ultimate XML sitemap?

- Only pages that return a 200 status (page found). No 404 errors, redirects, 500 errors, etc.

- Only pages that are not blocked by robots.txt.

- Only pages that are the canonical page.

- Only pages on the second-level domain (i.e., no subdomains; they should get their own XML sitemap).

- In most cases, only pages that are in the same language (even if all your language pages are on the same TLD, each language usually gets its own sitemap).
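The checklist above can be sketched as a small filter. This is only an illustration, not how any particular tool works: the `Page` record, the `belongs_in_sitemap` function, the example.com URLs, and the sample robots.txt rule are all hypothetical, and the language rule is left out because it depends on how your site structures its language pages.

```python
from dataclasses import dataclass
from typing import Optional
from urllib.parse import urlsplit
from urllib.robotparser import RobotFileParser


@dataclass
class Page:
    """Hypothetical record for one crawled URL."""
    url: str
    status: int
    canonical: Optional[str] = None  # None means no canonical tag was found


def belongs_in_sitemap(page: Page, host: str, robots: RobotFileParser) -> bool:
    """Apply the checklist: 200 only, same host, not blocked, canonical."""
    if page.status != 200:
        return False  # no 404s, redirects, 500s, etc.
    if urlsplit(page.url).netloc.lower() != host.lower():
        return False  # subdomains get their own sitemap
    if not robots.can_fetch("Googlebot", page.url):
        return False  # blocked by robots.txt
    if page.canonical and page.canonical != page.url:
        return False  # canonical points somewhere else
    return True


# Sample robots.txt rules (an assumption for this example)
robots = RobotFileParser()
robots.parse(["User-agent: *", "Disallow: /search/"])

pages = [
    Page("https://www.example.com/shoes", 200, "https://www.example.com/shoes"),
    Page("https://www.example.com/old", 301),
    Page("https://www.example.com/search/?q=shoes", 200),
    Page("https://www.example.com/shoes?color=red", 200, "https://www.example.com/shoes"),
    Page("https://blog.example.com/post", 200),
]

keep = [p.url for p in pages if belongs_in_sitemap(p, "www.example.com", robots)]
print(keep)
```

Only the first sample page passes every check; each of the others fails exactly one rule from the list.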

So in the end, the perfect XML sitemap file should 100% reflect what, in a perfect world, Google crawls and indexes. Ideally, your site has a process to create these perfect sitemaps on a routine basis, without your intervention.

As new products or pages come in and out, the XML sitemap should simply overwrite itself. However, the remainder of this post explains how to create a single XML sitemap for times when a sitemap prototype is needed or as a quick fix to a faulty sitemap generator.

What Tools Do You Need To Create An XML Sitemap?

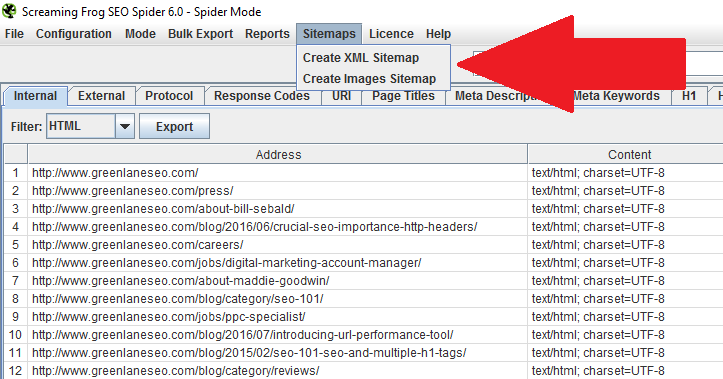

Screaming Frog is an incredibly powerful website crawler, ideal for all kinds of SEO tasks. One of its many features is the ability to export perfectly written XML sitemaps. If your export is large, it splits the sitemaps properly and includes a sitemapindex.xml file. While you’re at it, you can even export an image sitemap.

Screaming Frog is free for small crawls, but if you have a site with 500+ URLs, go for the paid version. This is a tool that you will be glad you paid for if you do SEO work. It costs just £99 a year (or $130).

Once you’ve installed it on your desktop, you are almost ready to go. If you are working on extremely large sites, you will probably need to increase its memory allocation. Out of the box, Screaming Frog allocates 512MB of RAM. As you can imagine, the more you crawl, the more memory you need. To increase it, follow Screaming Frog’s memory allocation steps.

Now that Screaming Frog is installed and loaded, you are ready to go.

Setting Up For The Perfect Crawl

Screaming Frog may look intimidating, but it is very easy to use. Under Configuration > Spider, you have several checkboxes that tell Screaming Frog how it should behave. We are trying to get Screaming Frog to emulate Google, so we want to check a few boxes here.

Check the following boxes before running the crawl:

- Respect Noindex

- Respect Robots.txt

- Do not crawl nofollow

- Do not crawl external links

- Respect canonical

At this point, I recommend crawling the site. Think of this as the first wave.

How To Examine Crawl Data For Sitemap Creation

Export the full crawl data from Screaming Frog. We will review all the pages in Excel or Google Sheets. While we know that we have taken steps to show only what search engines can access themselves, we want to make sure they are not seeing any pages that we are not aware of.

You know those parameters, like ?color= on eCommerce sites, or those /search/ URLs that you may not want indexed? I like to sort the URL column from A to Z so I can quickly scan it and spot duplicate URLs.
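If the export is too long to eyeball, the same review can be roughed out in code. The sketch below sorts a list of URLs and flags the ones worth a second look. The `color` parameter and `/search/` path come from the examples above; the names `SUSPECT_PARAMS`, `SUSPECT_PATHS`, and `flag_url`, and the example.com URLs, are made up for illustration.

```python
from urllib.parse import parse_qs, urlsplit

# Hypothetical watch lists; adjust to whatever your own site produces.
SUSPECT_PARAMS = {"color"}
SUSPECT_PATHS = ("/search/",)


def flag_url(url: str) -> list:
    """Return the reasons a URL deserves a manual look (empty if none)."""
    parts = urlsplit(url)
    reasons = []
    # Collect query parameter names, ignoring case
    params = {key.lower() for key in parse_qs(parts.query, keep_blank_values=True)}
    hits = sorted(params & SUSPECT_PARAMS)
    if hits:
        reasons.append("parameter: " + ", ".join(hits))
    for prefix in SUSPECT_PATHS:
        if parts.path.startswith(prefix):
            reasons.append("path: " + prefix)
    return reasons


urls = [
    "https://www.example.com/shoes?color=red",
    "https://www.example.com/search/?q=boots",
    "https://www.example.com/shoes",
]

# Sorting A to Z puts near-duplicates next to each other
for url in sorted(urls):
    print(url, flag_url(url) or "")
```

A flag here is only a prompt to look closer; whether a flagged URL should stay out of the sitemap is still your call.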

This data is very valuable not only for creating a solid XML sitemap but also for going back and blocking or fixing pages on your site that need attention. Unless your site is 100% optimized, this is a valuable, if tedious, check for potentially problematic URLs. I recommend running this crawl and reviewing your data at least once a quarter.

Scrubbing Out Bad URLs

In this case, a “bad” URL is simply one that we don’t want Google to see. Ultimately, we have to pass these additional exclusions on to Screaming Frog. At this point, you have two options:

- upload your cleaned Excel list to Screaming Frog,

- or run a new Screaming Frog crawl with built-in exclusions.

Option 1: Using your spreadsheet, remove the rows that contain URLs you don’t want. Speed up the process by using Excel filters (contains, does not contain, etc.). The only column of data that matters to us is the one with your URLs. Also, use Excel filters to show only URLs with a 200 status (page found). The time it takes to audit this spreadsheet depends on the number of URLs you have, the different types of URL conventions, and how comfortable you are with Excel.

Then copy the entire “good” URL column and go back to Screaming Frog. Start a new crawl using the Mode > List option. Paste your URLs and start crawling. Once all the correct URLs are back in Screaming Frog, proceed to the next section.
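If you would rather script the Option 1 cleanup than filter by hand, a minimal sketch could look like the one below. It assumes the export is a CSV with columns named `Address` and `Status Code`; check the header row of your own export and adjust. The `UNWANTED` substrings, the `good_urls` function, and the sample rows are hypothetical.

```python
import csv
import io

# Hypothetical "does not contain" filters, like the Excel ones described above
UNWANTED = ("?color=", "/search/")


def good_urls(csv_file, url_col="Address", status_col="Status Code"):
    """Keep URLs with a 200 status that contain none of the unwanted substrings."""
    urls = set()
    for row in csv.DictReader(csv_file):
        url = row[url_col].strip()
        if row[status_col].strip() != "200":
            continue  # only pages that were found
        if any(fragment in url for fragment in UNWANTED):
            continue  # URL convention we don't want in the sitemap
        urls.add(url)
    return sorted(urls)


# In-memory sample standing in for a real export file
sample = io.StringIO(
    "Address,Status Code\n"
    "https://www.example.com/shoes,200\n"
    "https://www.example.com/shoes?color=red,200\n"
    "https://www.example.com/old-page,404\n"
    "https://www.example.com/search/boots,200\n"
    "https://www.example.com/boots,200\n"
)

cleaned = good_urls(sample)
print("\n".join(cleaned))  # one URL per line, ready to paste into list mode
```

For a real file, open the export with `open(path, newline="", encoding="utf-8")` and pass the file object in place of `sample`.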

Option 2: Now that you know the URLs you want to block, you can exclude them with Screaming Frog’s exclude feature. Configuration > Exclude opens a small window for entering regular expressions (regex). New to regular expressions? No problem, it’s really quite easy, as Screaming Frog gives you great examples that you just need to bend to your liking: https://www.screamingfrog.co.uk/seo-spider/user-guide/configuration/#exclude.
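Before pasting patterns into the exclude window, it can help to try them against a few known URLs. The sketch below uses Python’s `re.fullmatch` as a stand-in; Screaming Frog’s own regex engine and matching rules may differ, so treat this as a rough pre-check and confirm the behavior in the tool itself. The two patterns and the `is_excluded` helper are illustrative assumptions, not taken from the Screaming Frog guide.

```python
import re

# Hypothetical exclude patterns for the ?color= and /search/ examples
EXCLUDE_PATTERNS = [
    r".*\?color=.*",   # any URL whose query string starts with color=
    r".*/search/.*",   # any URL with a /search/ folder in its path
]
compiled = [re.compile(pattern) for pattern in EXCLUDE_PATTERNS]


def is_excluded(url: str) -> bool:
    """True if the whole URL matches at least one exclude pattern."""
    return any(rx.fullmatch(url) for rx in compiled)


for url in [
    "https://www.example.com/shoes?color=red",
    "https://www.example.com/search/boots",
    "https://www.example.com/shoes",
]:
    print(url, "->", "excluded" if is_excluded(url) else "kept")
```

Note that the first pattern only catches `color` when it is the first parameter; a URL like `/shoes?size=9&color=red` would need a looser pattern such as `.*[?&]color=.*`.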

(Alternatively, you can use the include feature if there are certain types of URLs or sections that you specifically want to crawl. Follow the same instructions as above, just in reverse.) Once you have a perfect crawl in Screaming Frog, skip to the next section below.

Export The XML Sitemap

At this stage, you have completed either Option 1 or Option 2 above, and all the URLs you want indexed are loaded into Screaming Frog. All that’s left is the simplest step yet: export!

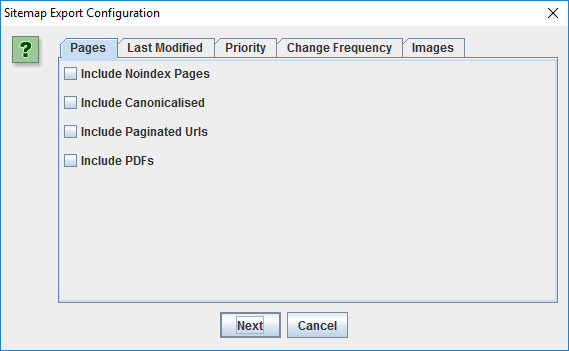

You have some additional checkboxes to consider. A very clever set of selections, if you ask me.

These help you really refine what is included in the XML sitemap, in case you missed something in the steps above. Just select what makes sense to you and run the export. Screaming Frog will save the sitemaps to the location you choose, ready to upload to your site. Don’t forget to submit these new sitemaps in Google Search Console and Bing Webmaster Tools.

(If you need some clarity on what these definitions are, visit http://www.sitemaps.org/protocol.html)
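For reference, the core of the file format defined by that protocol is small enough to sketch by hand. The snippet below builds a bare-bones sitemap with only the required `<loc>` entries, using Python’s standard library. It is an illustration of the format, not a reproduction of what Screaming Frog exports; the `build_sitemap` function and the example.com URLs are hypothetical.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS = 50000  # per-file URL limit in the sitemaps.org protocol


def build_sitemap(urls) -> str:
    """Return a minimal sitemap document containing one <loc> per URL."""
    urls = list(urls)
    if len(urls) > MAX_URLS:
        raise ValueError("too many URLs for one file; split and use a sitemap index")
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url  # ElementTree escapes & for us
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


sitemap_xml = build_sitemap([
    "https://www.example.com/",
    "https://www.example.com/shoes?size=9&page=2",
])
print(sitemap_xml)
```

Optional tags such as `<lastmod>` are left out here; the protocol page linked above describes them.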

Done. Remember, this is just a snapshot of your ever-changing website. I still fully recommend a dynamic XML sitemap that updates as your site changes. I hope this has been helpful.